Approximate Dynamic Imputation

As stated earlier, any substantial improvement in the performance of missing values processing comes at a high computational cost. Thus, we recommend an alternative workflow for networks with a large number of nodes and many missing values. The proposed approach combines the efficiency of Static Imputation with the imputation quality of Dynamic Imputation.

Static Imputation is efficient for learning because it does not impose any additional computational cost on the learning algorithm. With Static Imputation, missing values are imputed in memory, which makes the imputed dataset equivalent to a fully observed dataset.

Even though, by default, Static Imputation runs only once at the time of data import, it can be triggered to run again at any time by selecting Menu > Learning > Parameter Estimation. Whenever Parameter Estimation is run, BayesiaLab computes the probability distributions on the basis of the current model. The missing values are then imputed by drawing from these distributions. If we now alternate structural learning and Static Imputation repeatedly, we can approximate the behavior of the Dynamic Imputation method. The speed advantage comes from the fact that values are now only imputed (on demand) at the completion of each full learning cycle instead of being imputed at every single step of the structural learning algorithm.

Usage

As a best-practice recommendation, we propose the following sequence of steps:

- In Step 3 of the Data Import Wizard, we choose Static Imputation (standard or entropy-based). This produces an initial imputation with the fully unconnected network, in which all the variables are independent.

- We run the Maximum Weight Spanning Tree algorithm to learn the first network structure.

- Upon completion, we prompt another Static Imputation by running Parameter Estimation. Given the tree structure of the network, pairwise variable relationships provide the distributions used by the Static Imputation process.

- Given the now-improved imputation quality, we start another structural learning algorithm, such as EQ, which may produce a more complex network.

- The latest, more complex network then serves as the basis for yet another Static Imputation. We repeat steps 4 and 5 until we see the network converge toward a stable structure.

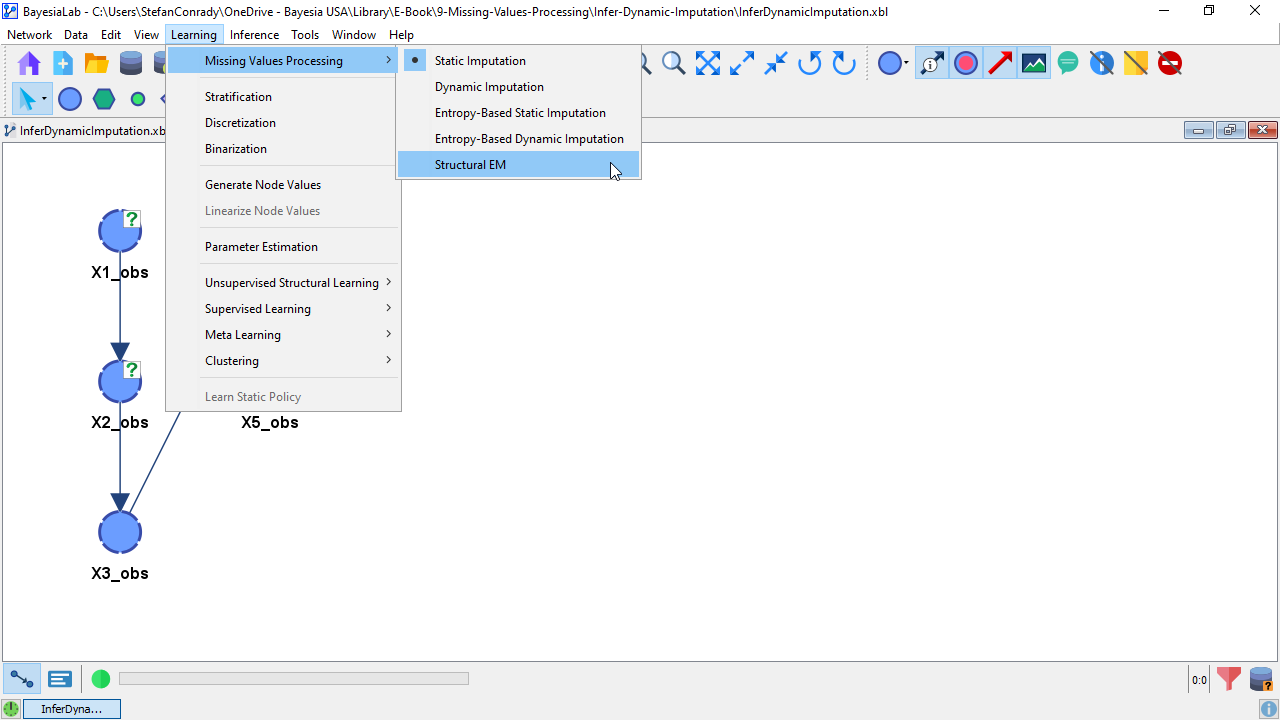

- With a stable network structure in place, we change the imputation method from Static Imputation to Structural EM via

Menu > Learning > Missing Values Processing > Structural EM.

While this Approximate Dynamic Imputation workflow requires more input and supervision by the researcher, for learning large networks, it can save a substantial amount of time compared to using the all-automatic Dynamic Imputation or Structural EM. Here, “substantial” can mean the difference between minutes and days of learning time.