Embedding Generator

Context

- In machine learning and Natural Language Processing (NLP), an embedding is a mathematical representation of a token, word, phrase, sentence, or other linguistic unit as a continuous, high-dimensional vector. Word embeddings, in particular, are widely used because they capture the semantic and syntactic properties of words.

- The embeddings used by Hellixia have 1,536 dimensions and capture the semantics of the linguistic units defined by the nodes (names, long names, comments).
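To make "capturing semantics" more concrete, here is a minimal sketch of cosine similarity, a common way to compare two embedding vectors. It is not part of the Hellixia workflow described on this page. The three 4-dimensional vectors are made up for illustration, whereas real Hellixia embeddings have 1,536 dimensions.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Toy vectors, invented for this example (not actual embeddings).
monet = [0.9, 0.1, 0.3, 0.0]
renoir = [0.8, 0.2, 0.4, 0.1]
picasso = [0.1, 0.9, 0.0, 0.4]

# With these toy values, the first pair scores higher than the second.
print(cosine_similarity(monet, renoir))
print(cosine_similarity(monet, picasso))
```

Vectors that point in similar directions score close to 1, which is the sense in which nearby embeddings are said to be semantically related.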

Usage, Workflow, and Example

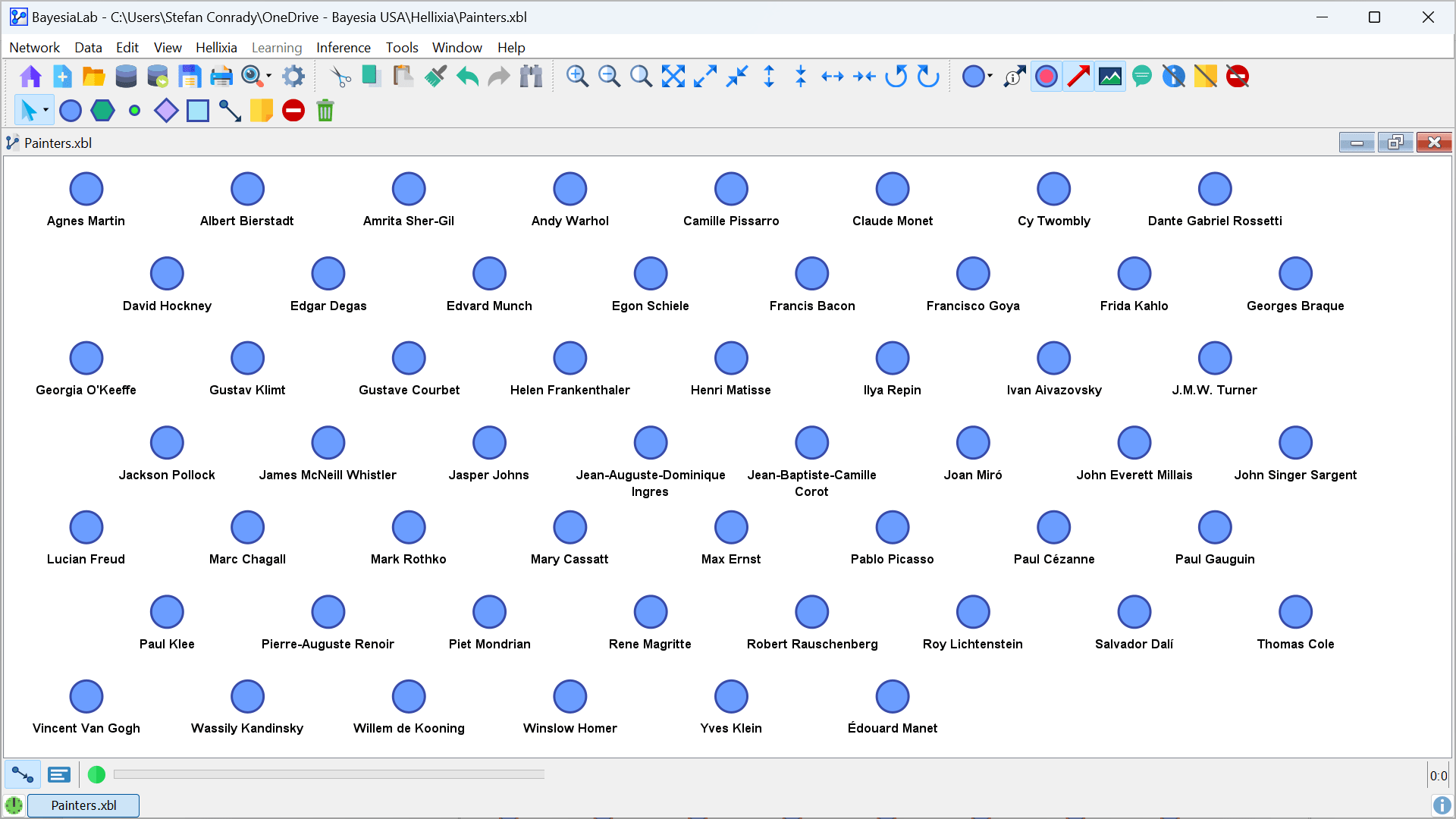

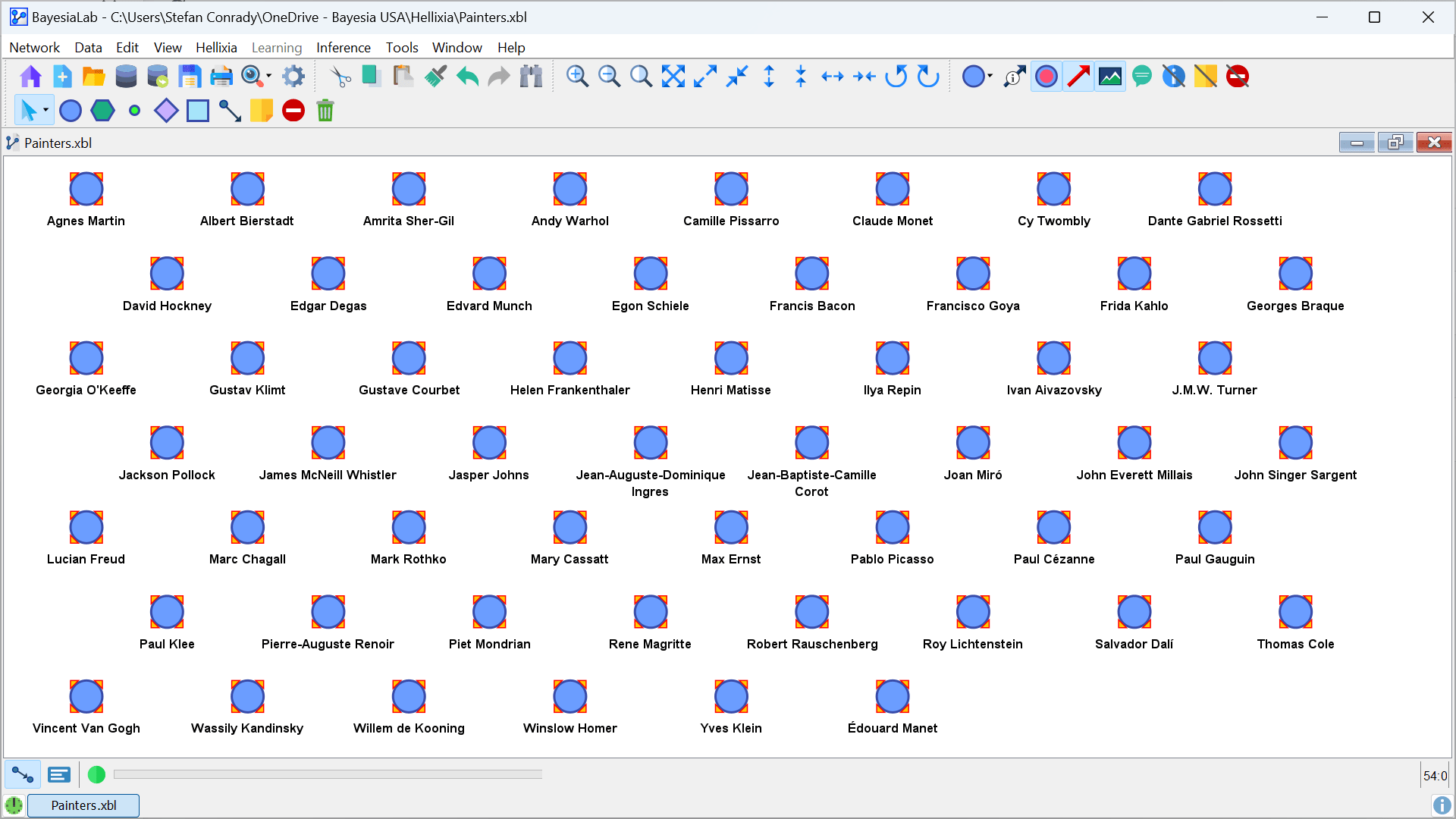

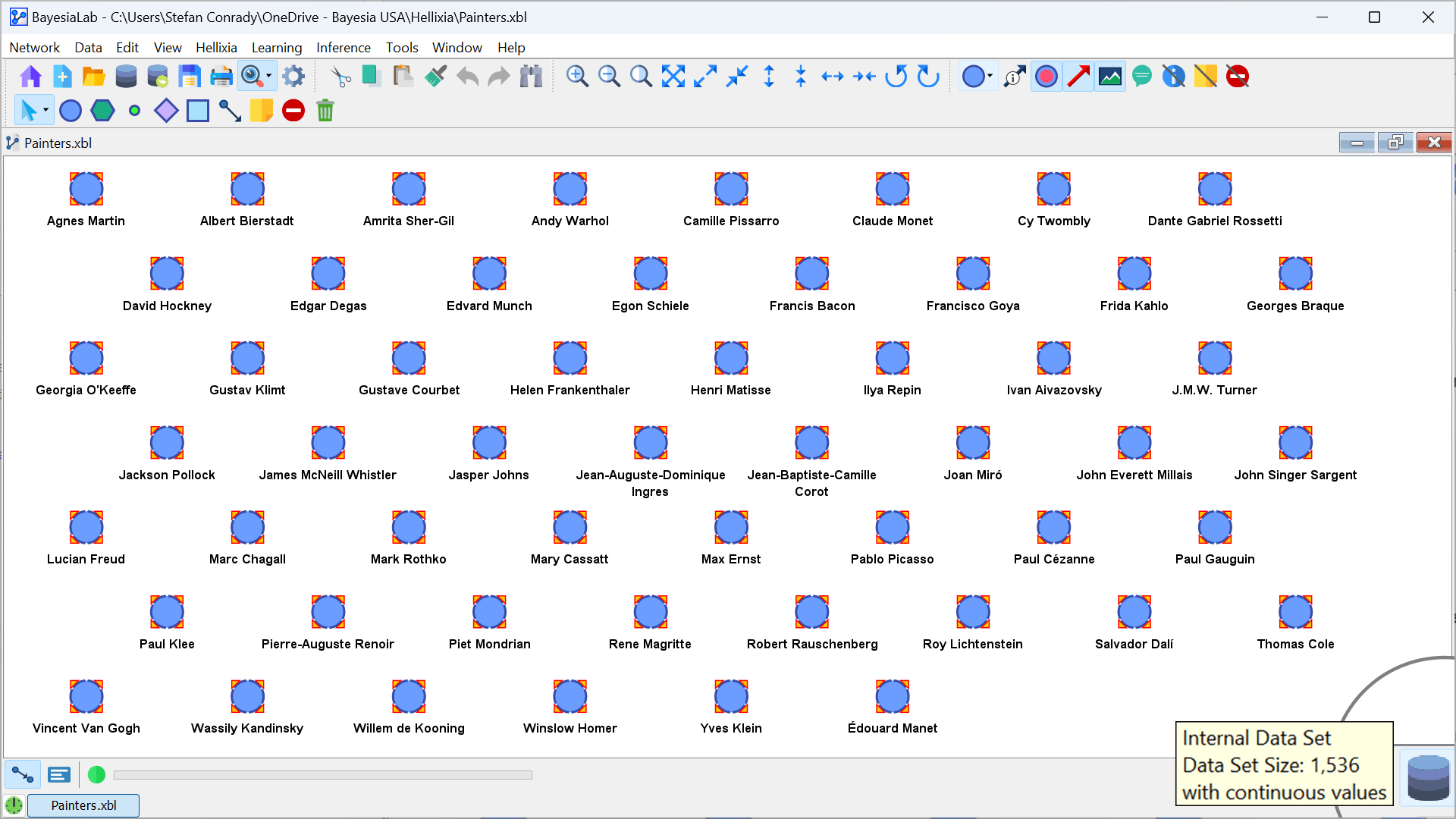

- To demonstrate the workflow for generating embeddings, we start with a set of 54 nodes representing a selection of influential 19th- and 20th-century painters.

- In Modeling Mode (F4), select the nodes in the Graph Panel for which you want to generate embeddings. In our example, we select all 54 nodes.

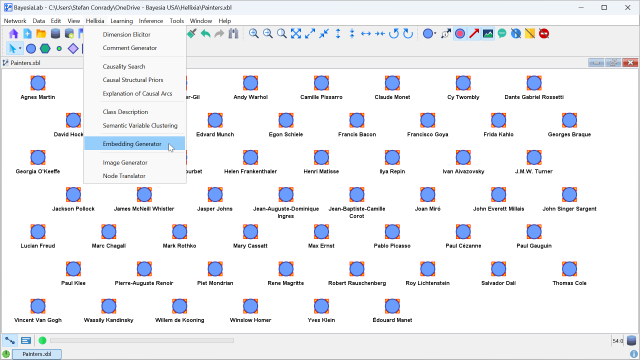

- Go to

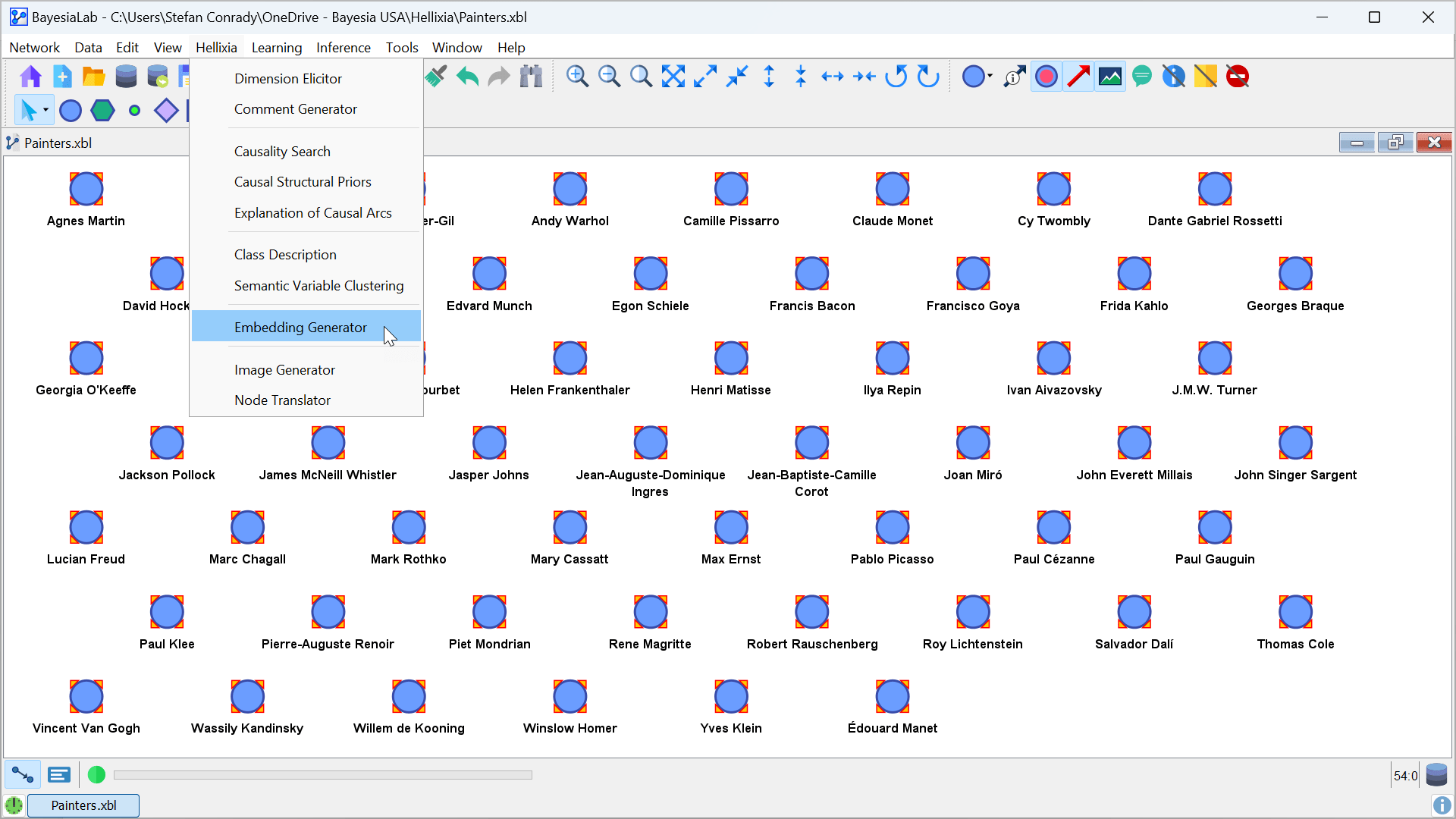

Menu > Hellixia > Embedding Generator.

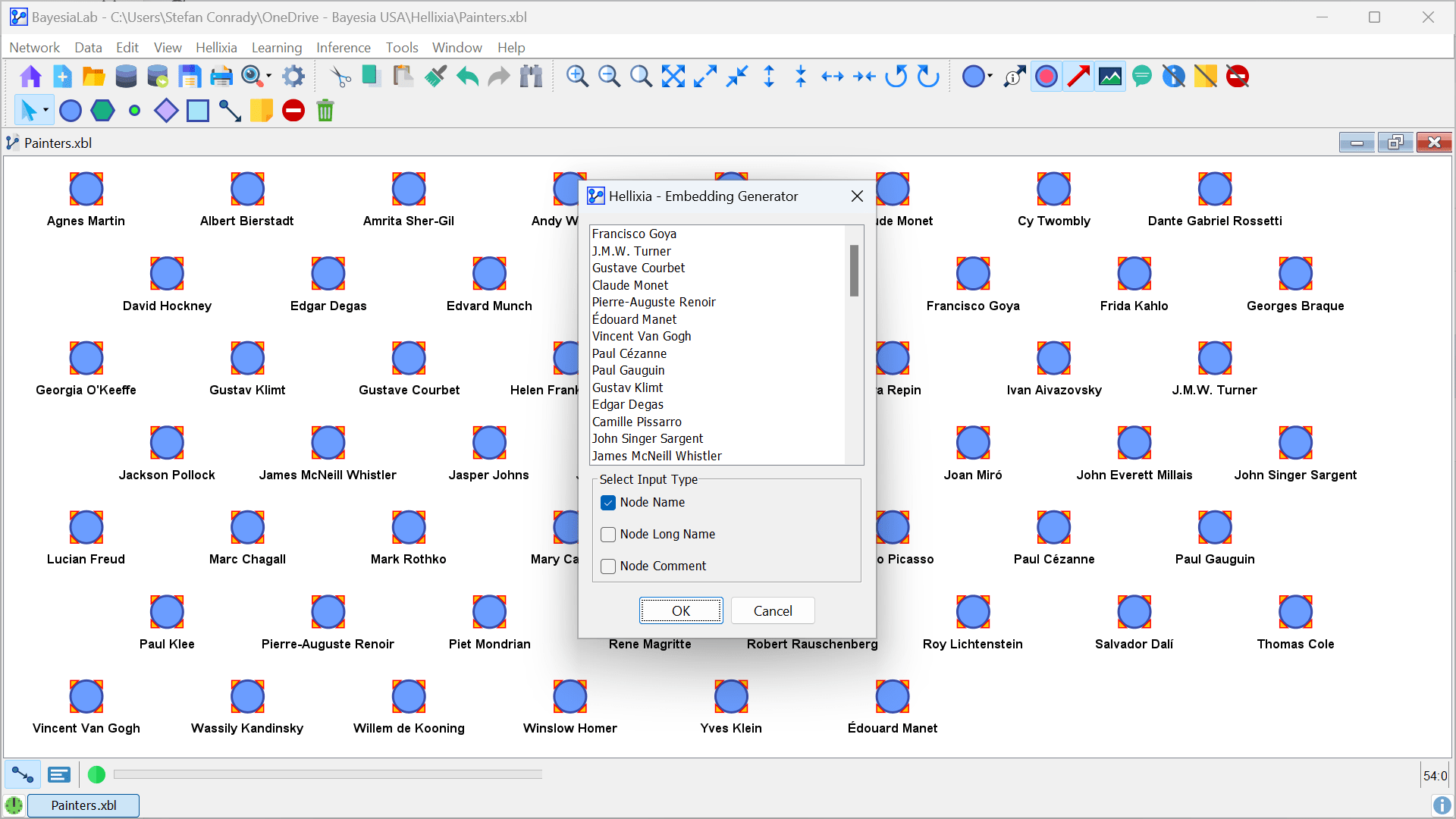

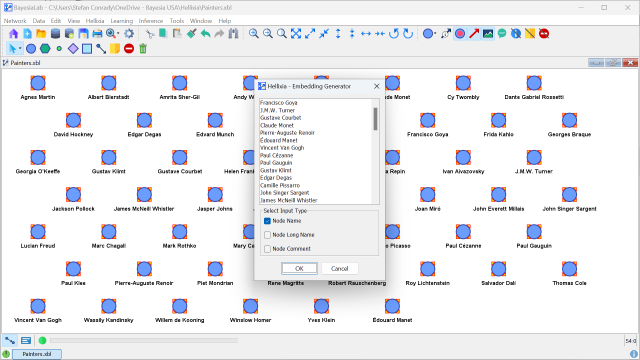

- In the Hellixia Embedding Generator window, select one or more Input Types: Node Name, Node Long Name, or Node Comment. In the example, only Node Names are defined, so that is the only Input Type you need to select.

- Click OK.

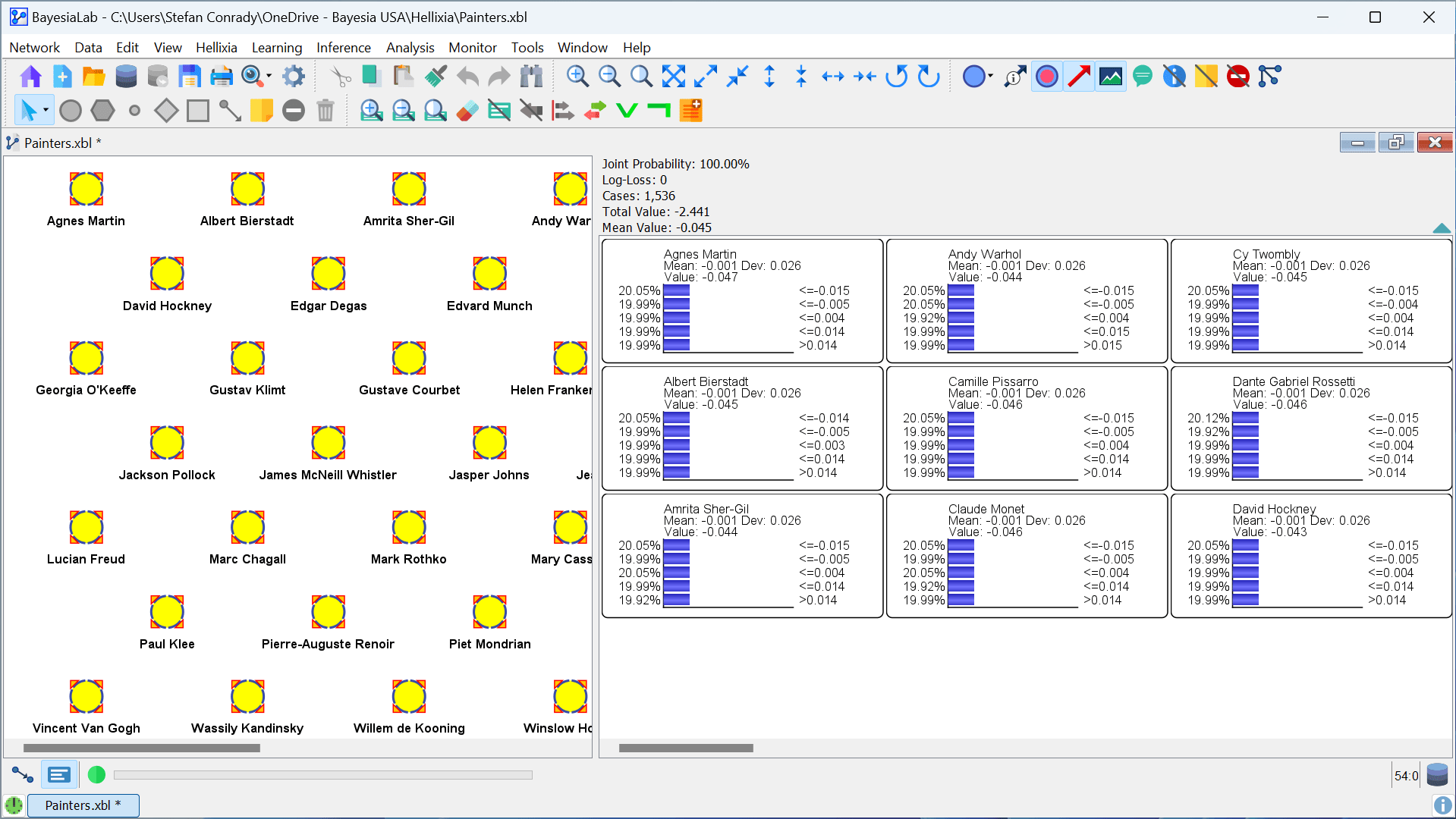

- Upon retrieving the embeddings, the Main Window shows the database icon in the bottom right corner. This indicates that the embeddings are now attached as a dataset.

- Each node now has 1,536 observations, which is indicated by the Tooltip associated with the database icon.

- By default, the observations associated with each node are discretized into quintiles, which you can see by switching to Validation Mode (F5) and bringing up any of the Monitors.
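As an illustration of what discretizing into quintiles means, the following sketch assigns each observation to one of five equal-frequency bins by rank. It is a plain equal-frequency binning written for this example. BayesiaLab's own discretization may handle ties and thresholds differently, and the function name `quintile_bins` is hypothetical.

```python
def quintile_bins(values):
    """Assign each value to one of five equal-frequency bins (0 = lowest fifth).

    Binning is rank-based, so tied values may land in adjacent bins.
    """
    n = len(values)
    if n == 0:
        return []
    # Positions of the values, ordered from smallest to largest value.
    order = sorted(range(n), key=lambda i: values[i])
    bins = [0] * n
    for rank, i in enumerate(order):
        bins[i] = rank * 5 // n
    return bins


# Ten made-up observations for one node.
observations = [0.5, -0.2, 0.1, 0.9, -0.7, 0.3, 0.0, -0.4, 0.6, 0.2]
print(quintile_bins(observations))
```

With 1,536 observations per node, each of the five bins would hold 307 or 308 values under this scheme.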

Application Example: Learning a Semantic Network

A semantic network is a graphical representation of knowledge or concepts organized in a network-like structure. It is a form of knowledge representation that depicts how different concepts or entities are related to each other through meaningful connections.

In a semantic network, concepts are represented as nodes, and their relationships are depicted as labeled links or arcs. These links indicate the connections or associations between the concepts, such as hierarchical, associative, or causal relationships.
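The node-and-labeled-link structure described above can be sketched as a small data structure. This is only an illustration of the concept, not how BayesiaLab stores networks. The class name `SemanticNetwork`, its methods, and the link labels are all hypothetical.

```python
from collections import defaultdict


class SemanticNetwork:
    """Concepts as nodes, relationships as labeled, directed links."""

    def __init__(self):
        # Maps a source concept to a list of (label, target) pairs.
        self._links = defaultdict(list)

    def add_link(self, source, label, target):
        self._links[source].append((label, target))

    def related(self, source, label=None):
        """Return the targets linked from source, optionally filtered by label."""
        return [
            target
            for link_label, target in self._links.get(source, [])
            if label is None or link_label == label
        ]


network = SemanticNetwork()
network.add_link("Claude Monet", "is associated with", "Impressionism")
network.add_link("Claude Monet", "is a", "painter")
network.add_link("Impressionism", "is a", "art movement")

print(network.related("Claude Monet"))
print(network.related("Claude Monet", label="is a"))
```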

- With the embeddings now stored as observations, we can machine-learn a semantic network.

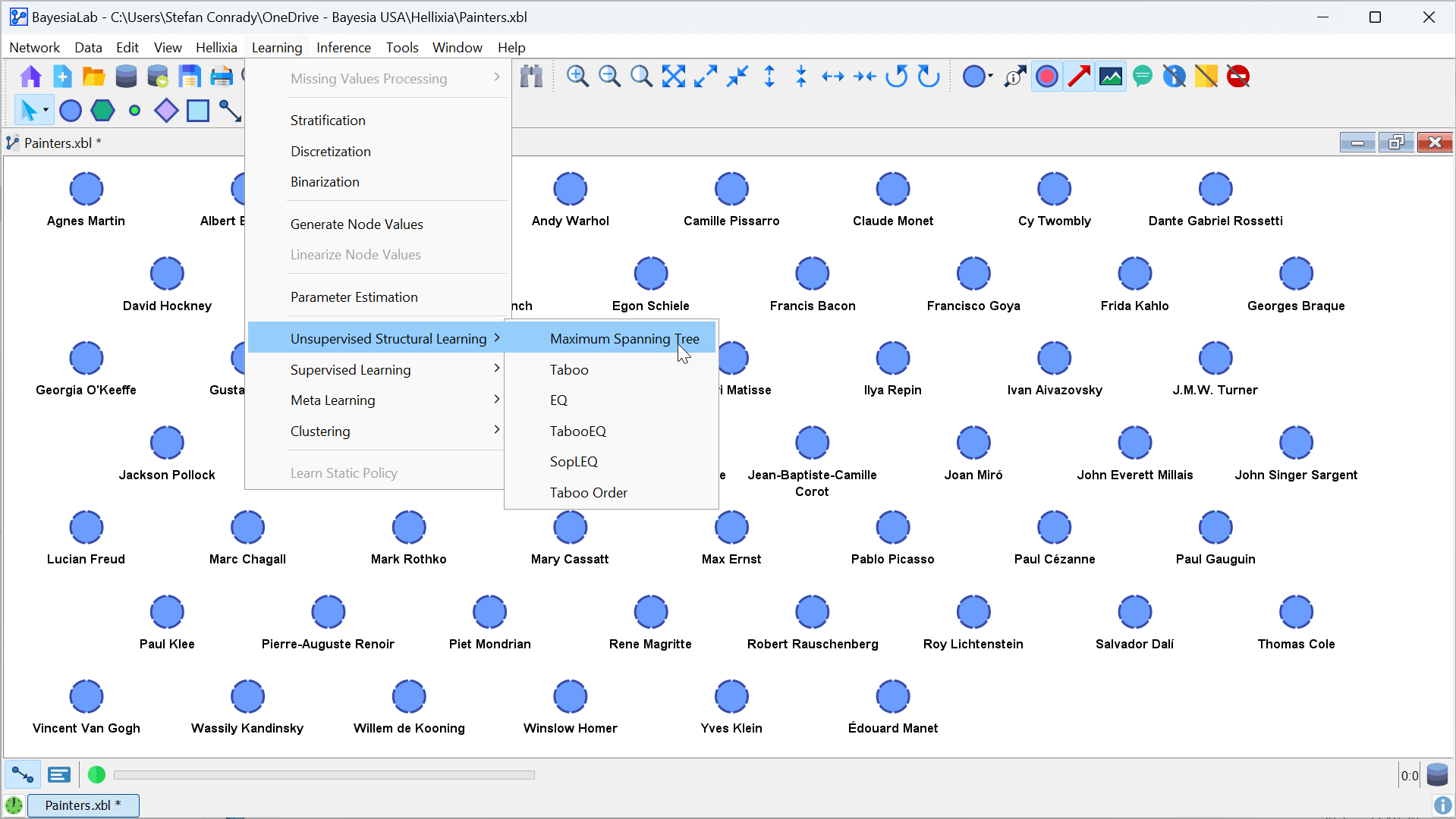

- For this purpose, we use one of BayesiaLab’s Unsupervised Learning algorithms.

- The Maximum Weight Spanning Tree (MWST) is the best choice in this context. The algorithm is quick and renders an easily interpretable network.

- In Modeling Mode (F4), select Menu > Learning > Unsupervised Structural Learning > Maximum Spanning Tree.

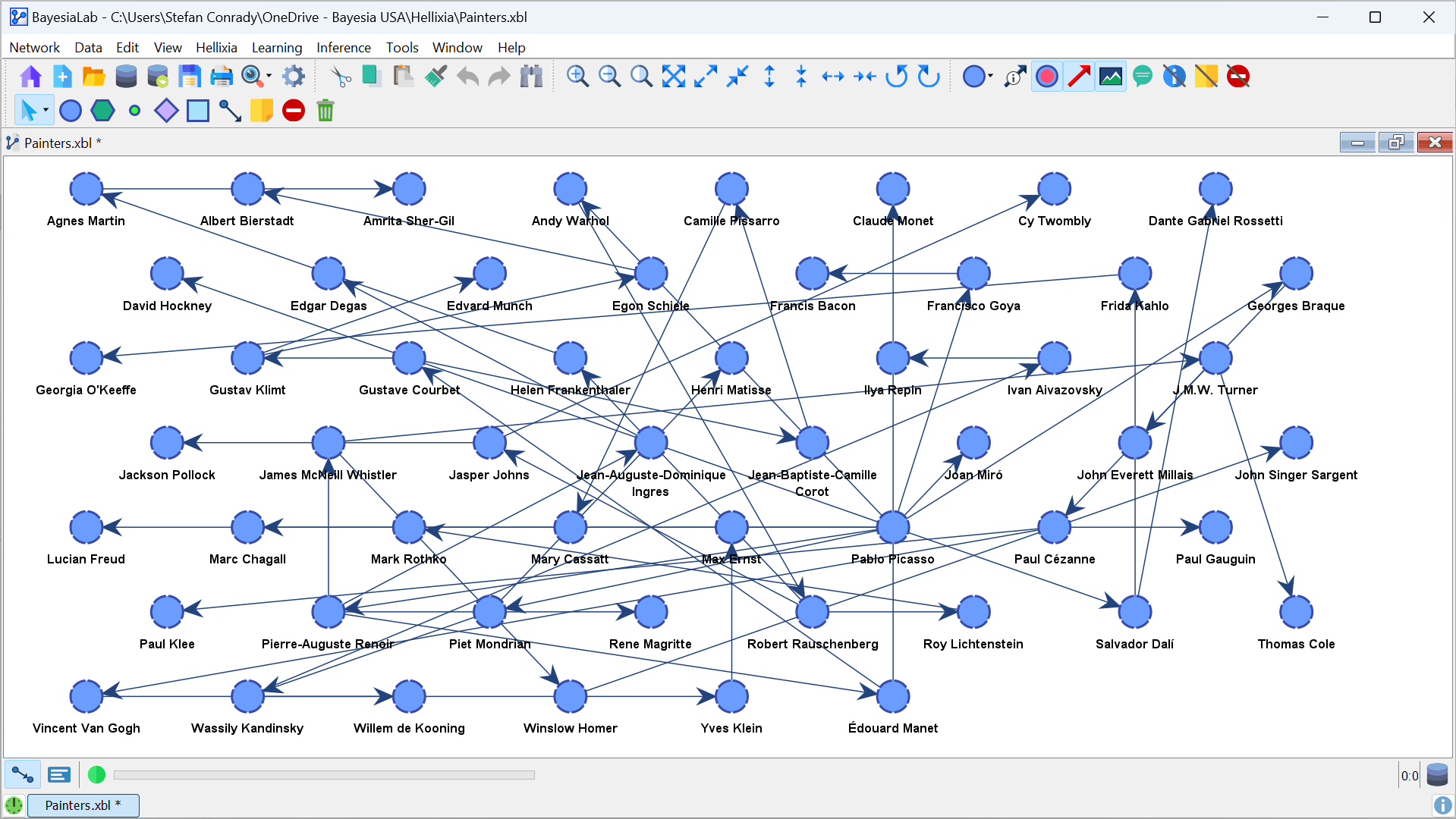

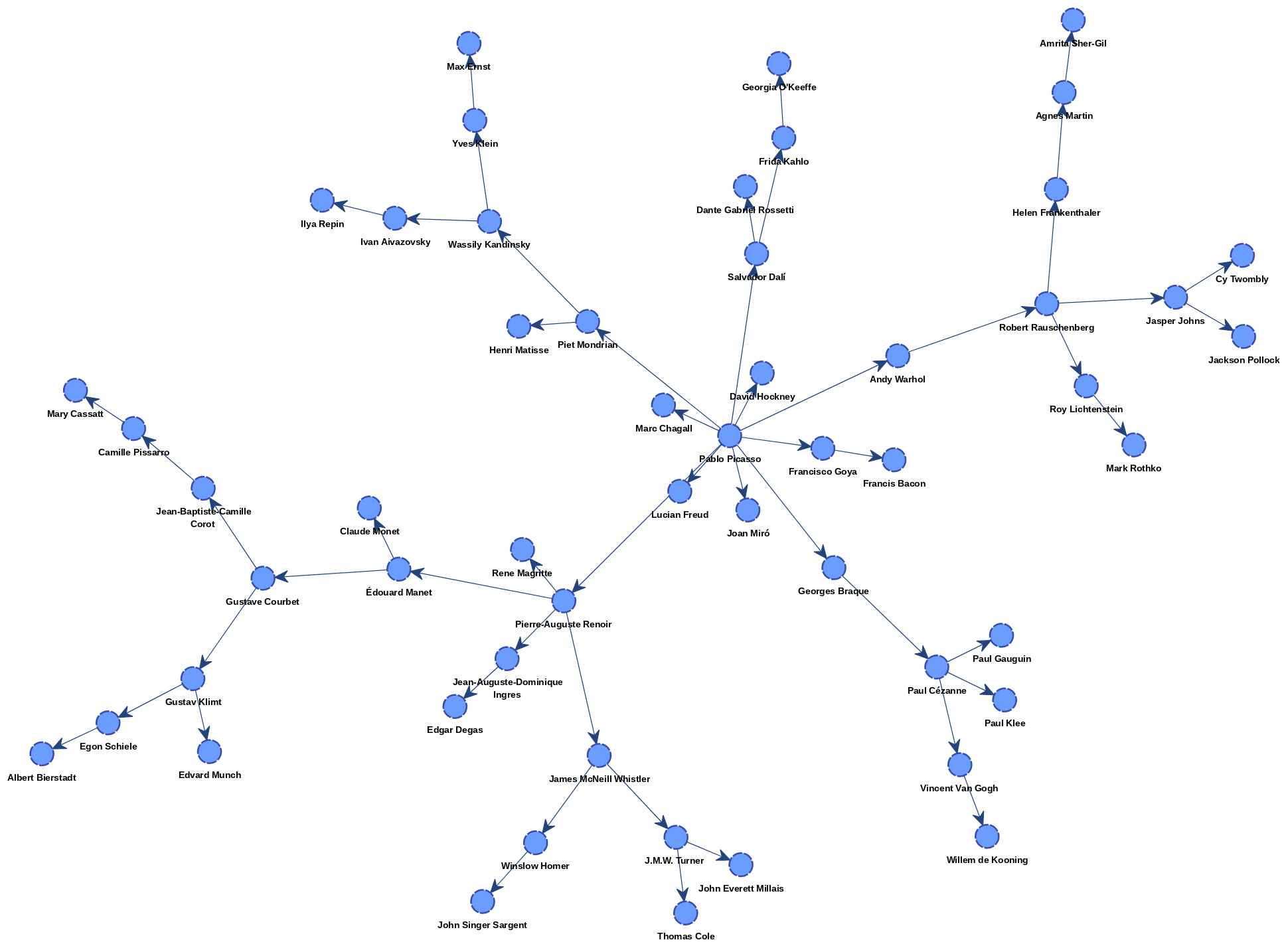

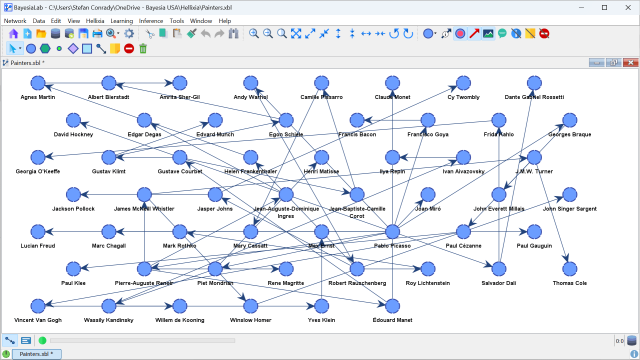

- After the learning is completed, the resulting network appears in the following screenshot:

- We can apply one of BayesiaLab’s layout algorithms to interpret this graph more easily.

- For instance, select Menu > View > Layout > Symmetric Layout.

- The resulting graph is shown outside the BayesiaLab window so that its structure can be viewed and interpreted more easily.
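For readers curious about the idea behind a maximum weight spanning tree, here is a minimal sketch using Kruskal's algorithm, taking the heaviest edges first. It assumes each pair of nodes already has some association score. How BayesiaLab scores pairs and implements the search is not described on this page, so the weights, node names, and function name below are purely illustrative.

```python
def maximum_spanning_tree(nodes, weighted_edges):
    """Build a maximum weight spanning tree with Kruskal's algorithm.

    nodes: iterable of hashable node names.
    weighted_edges: iterable of (weight, node_a, node_b) tuples.
    Returns the chosen edges, heaviest first.
    """
    parent = {node: node for node in nodes}

    def find(node):
        # Follow parent pointers to the root, shortening the path on the way.
        while parent[node] != node:
            parent[node] = parent[parent[node]]
            node = parent[node]
        return node

    tree = []
    for weight, a, b in sorted(weighted_edges, key=lambda e: e[0], reverse=True):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:  # the edge joins two separate components
            parent[root_a] = root_b
            tree.append((weight, a, b))
    return tree


# Hypothetical association scores between four nodes (made-up numbers).
nodes = ["A", "B", "C", "D"]
edges = [
    (0.9, "A", "B"),
    (0.8, "B", "C"),
    (0.7, "A", "C"),
    (0.4, "C", "D"),
    (0.2, "A", "D"),
]
tree = maximum_spanning_tree(nodes, edges)
print(tree)
```

The result connects all nodes with one fewer edge than there are nodes and contains no cycles, which helps explain why such a network is quick to learn and easy to read.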